ClickHouse Query Decomposition for Faster Metrics

Reduced heavy metrics query latency from ~8s to ~2s

Problem

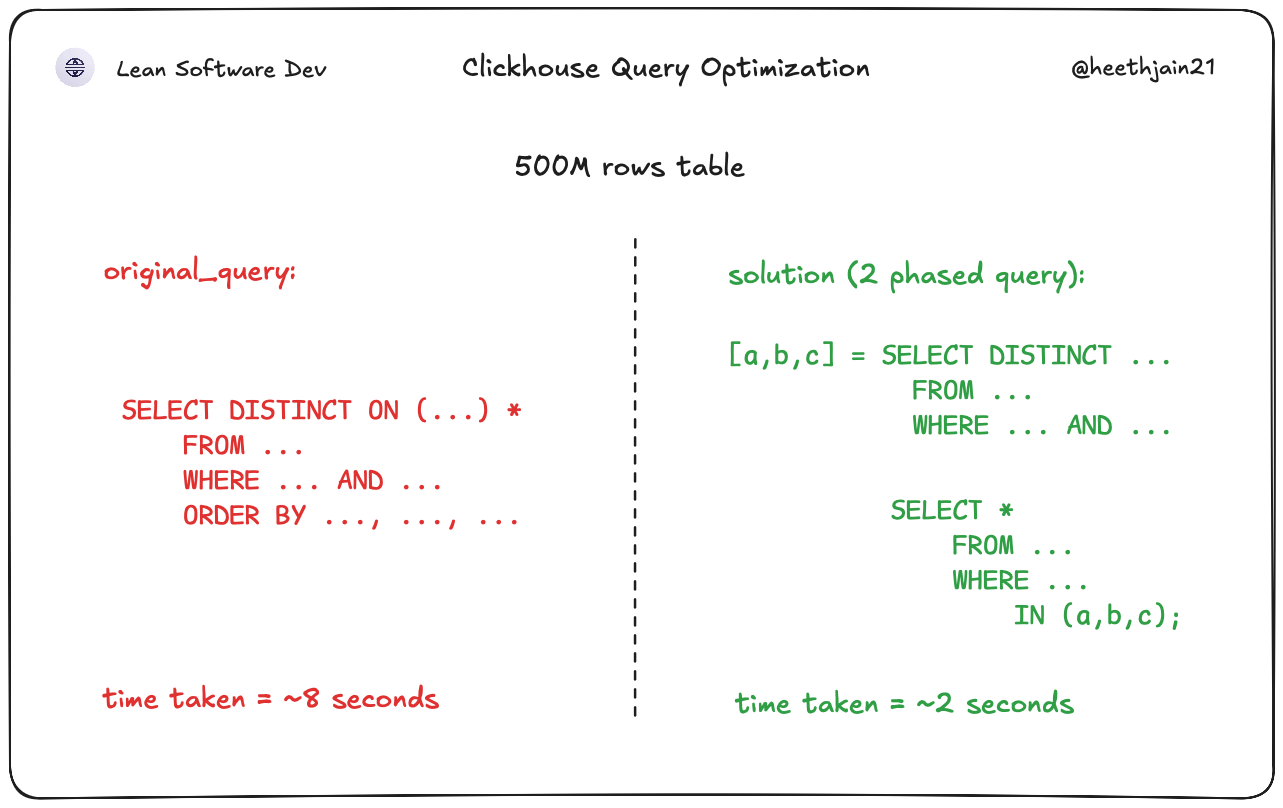

A Clickhouse query was taking ~8 seconds to execute which is long enough that most users would assume it was broken. The underlying query combined filtering, DISTINCT ON on multiple columns in one go, and no amount of index tuning would help.

Constraints

- Response time needed to drop significantly without waiting for a full data-model redesign.

- The data set was large enough that "textbook one-query optimization" was not enough in this case.

- The API had to keep producing the same business output for clients.

- The solution needed to be practical and safe to ship quickly.

Solution

- After some tinkering, replaced one heavyweight query with a two-step query plan.

Phase 1:

Query only distinct entity_id (single column) & values from the filtered asset pair using a narrow projection.

Phase 2:

Fetch full rows using the reduced entity_id list.

This intentionally decomposed the workload:

- Reduced row width during the expensive deduplication step.

- Minimized memory pressure and sorting cost.

- Converted part of the workload into targeted lookups.

Chose this over a single “clean” query because empirical execution behavior was significantly better under real data distribution.

More context

A ClickHouse query over a ~500M row historical dataset.

The query filtered by two asset columns (asset_a, asset_b) and used DISTINCT ON style logic to retrieve the latest row per entity_id. Because the table stored full historical snapshots, this required sorting and deduplicating a large working set of wide rows.

Outcome

- Reduced query latency from ~8s to ~2s.

- Eliminated user-facing timeout perception.

- Improved API stability under load.

- Demonstrated pragmatic optimization driven by measured execution behavior rather than theoretical query elegance.

Stack

- SQL

- ClickHouse